Google has decided to further improve its search engine with a new feature that can help users find what they are looking for, even when they are not sure exactly what it is.

It sounds complicated, but imagine, for example, that you want to buy a sweatshirt online that is similar to one you already have, but in a different color or with a different print on the front.

You don’t know the exact name of the sweatshirt model, but you know you like the cut. Or perhaps you want new wallpaper for your living room with exactly the same pattern as the book you just bought, so that the book's pattern stretches across an entire wall. How would anyone google that?

It seems that such acrobatics will now be possible and that the only limit will be your imagination and perseverance. Fortunately, the solution devised by Google is much simpler than this explanation.

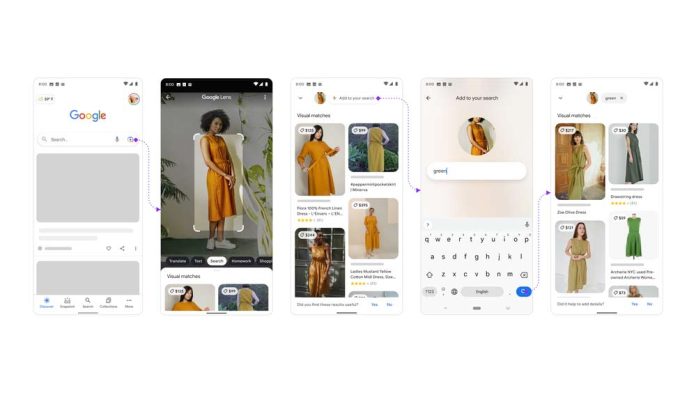

All a user needs to do is take a picture of an object, or use a picture of it found on the web, and then refine the search by adding a word or phrase to go with the picture.

For example, the word “green” could accompany the picture of the sweatshirt mentioned above if you wanted it in that color, and the pattern from the book could be paired with the word “wallpaper”. With a little visual input and a little text, users should be able to dig up results from corners of the internet they didn't even know existed.

Yesterday, Google launched a beta test of this feature in the United States, integrating it into its popular Google Lens app. The new feature is aptly named Multisearch and is expected to be available on both iOS and Android once it officially rolls out to users.

For now, the feature is focused mainly on online shopping, which is likely what users will use it for most. However, once fully integrated into Google Lens, Multisearch could make it much easier to find information or items when you're not sure exactly how to search for them.

One example given by Belinda Zeng, the project's manager, is a photo of beautifully manicured nails combined with the word “tutorial”. This way, users could find detailed instructions on how to get the same manicure themselves.

For now, Multisearch is still fairly simple, mostly limited to finding items in other colors or carrying a pattern over to a different kind of item. Still, we have no doubt that future versions of Multisearch will be even more sophisticated.
